Resilient Write: Giving Coding Agents a Write Path That Doesn’t Break

Apr 12, 2026 | Tools | MCP, LLM, Agents, Python, Security | LLM Agents

If you’ve spent any time watching an LLM coding agent work, you’ve seen it happen: the agent generates a perfectly good file, calls Write, and… nothing. The content vanishes. The agent retries the exact same payload. Five times. Then it gives up or cobbles together a cat >> file.tex <<EOF workaround in the shell.

This happened to me in April 2026 while an agent was producing a telemetry report. A LaTeX document containing redacted HTTP headers like Authorization: Bearer sk-ant-oat01-{REDACTED} got silently rejected by the host tool’s content filter. The prefix sk-ant- was enough to trigger the regex. No error. No feedback. Just silence and wasted tokens.

That incident motivated Resilient Write — an MCP server that sits between the agent and the filesystem, making writes durable, auditable, and recoverable.

The problem in five failure modes

Every agent write can break in one of five ways:

- Silent rejection: content filters block the payload with no signal

- Draft loss: the rejected content existed only in the model’s context, so it is gone

- Retry thrashing: the agent resends the identical rejected payload again and again

- Opaque errors: when errors do surface, they’re unstructured strings the agent can’t branch on

- Session fragility: if the session is interrupted, all in-flight state is lost

Most agent tooling treats these as edge cases. They’re not. In my experience, content-filter rejections alone affect roughly 15% of writes that contain anything resembling a token or credential string — even redacted ones.

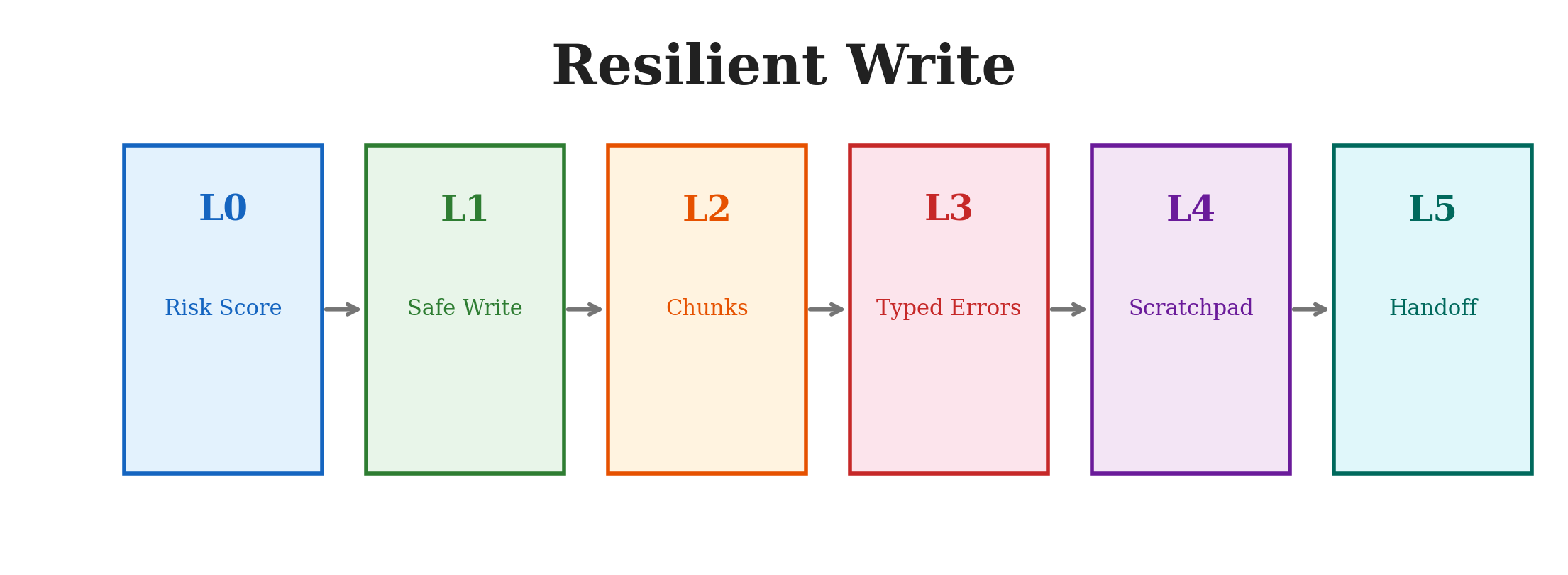

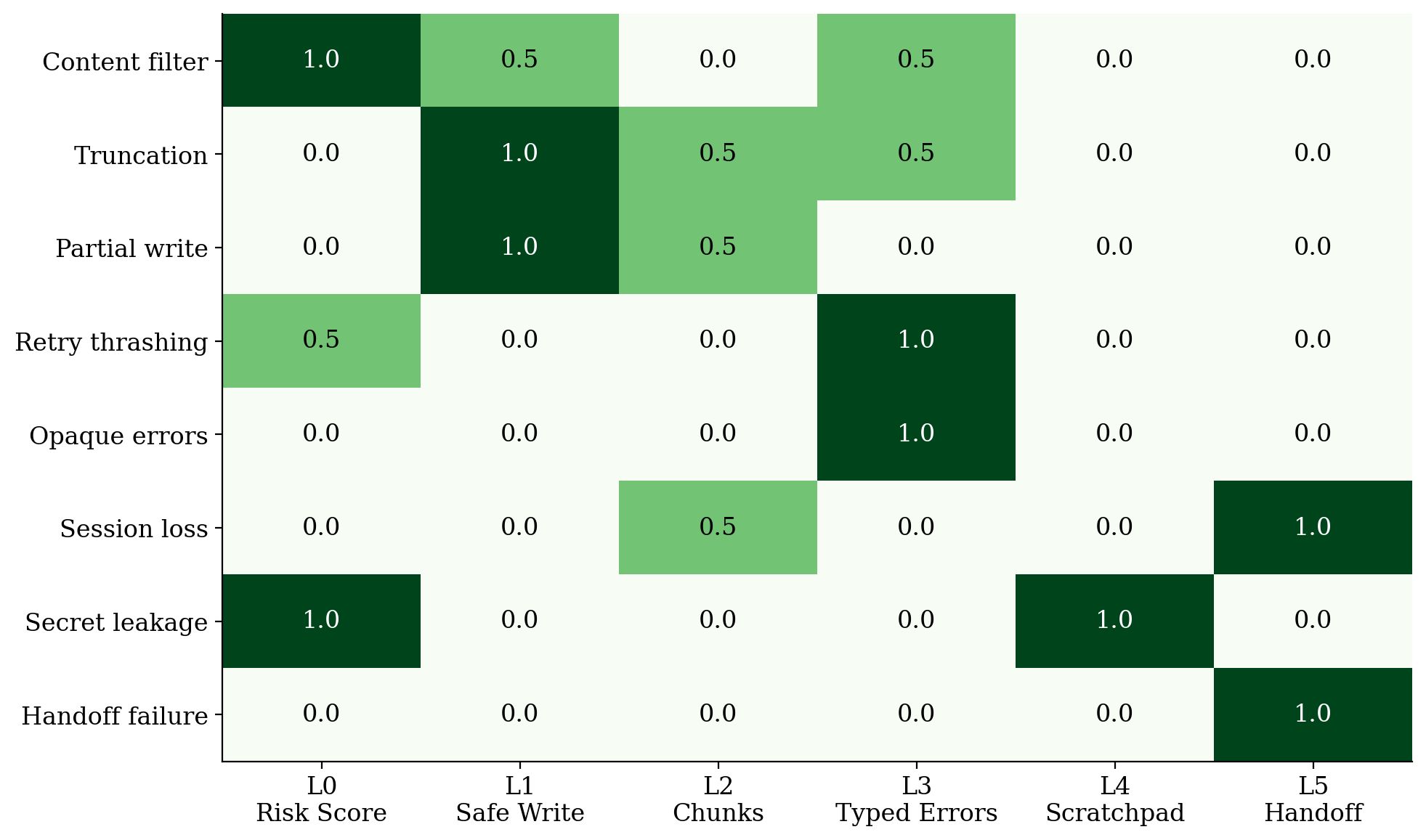

Six layers, each solving one problem

Resilient Write is structured as six orthogonal layers. Each one targets exactly one failure mode:

| Layer | Tool | What it does |
|---|---|---|
| L0 | rw.risk_score | Pre-flight regex classifier. Predicts whether content will trigger a filter before you try to write it. |
| L1 | rw.safe_write | Atomic write: temp file, fsync, SHA-256 verify, rename. Never leaves a half-written file. |
| L2 | rw.chunk_* | Break large writes into numbered chunks. If chunk 5 fails, chunks 1–4 are already on disk. |
| L3 | Error envelope | Every failure returns structured JSON: {error, reason_hint, suggested_action, retry_budget}. |
| L4 | rw.scratch_* | Content-addressed scratchpad for secrets that shouldn’t enter the workspace tree. |
| L5 | rw.handoff_* | Writes a HANDOFF.md envelope so a fresh agent can resume where the last one stopped. |

The layers are independent. You can use just L1 + L5 and get most of the value.
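To make the L1 row concrete, here is a minimal sketch of the temp file, fsync, SHA-256 verify, rename sequence in plain Python. It is an illustration under assumptions, not the project's implementation: the function name `safe_write`, its signature, and the `.rw-` temp-file prefix are all hypothetical.

```python
import hashlib
import os
import tempfile


def safe_write(path: str, content: str) -> str:
    """Atomically write content to path and return its SHA-256 hex digest.

    Hypothetical helper for illustration; not the rw.safe_write tool itself.
    """
    data = content.encode("utf-8")
    expected = hashlib.sha256(data).hexdigest()
    directory = os.path.dirname(os.path.abspath(path))

    # Create the temp file in the target directory so the final rename stays
    # on one filesystem, which is what makes it atomic.
    fd, tmp_path = tempfile.mkstemp(dir=directory, prefix=".rw-", suffix=".tmp")
    try:
        with os.fdopen(fd, "wb") as tmp:
            tmp.write(data)
            tmp.flush()
            os.fsync(tmp.fileno())  # push the bytes to disk before renaming

        # Read the temp file back and verify it before it becomes visible.
        with open(tmp_path, "rb") as check:
            actual = hashlib.sha256(check.read()).hexdigest()
        if actual != expected:
            raise OSError(f"hash mismatch: expected {expected}, read {actual}")

        os.replace(tmp_path, path)  # atomic rename over any existing file
    except BaseException:
        # On any failure, remove the temp file; the target is left untouched.
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise
    return expected
```

A reader of the target path sees either the old file or the complete new one, never a partial write. This sketch does not fsync the containing directory, which a stricter durability guarantee would also need.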

The risk scorer

L0 is a pure-function classifier: no LLM, no network, and under 50 ms on a 100 KB input. It maintains seven pattern families (API keys, GitHub PATs, JWTs, PEM blocks, AWS secrets, PII, binary blobs), each with a weighted score. Multiple hits in the same family are dampened sub-linearly, which avoids false positives on files that legitimately handle test credentials.

The output is a structured verdict: safe, low, medium, or high, plus a list of detected patterns (each truncated to 16 characters so the classifier’s own output doesn’t leak the secret it found).
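A rough sketch of how such a scorer could be put together is shown below. Everything specific in it is an assumption made for illustration: the regexes, the weights, the logarithmic dampening, the verdict thresholds, and the output field names are hypothetical, and only four of the seven families are included.

```python
import math
import re

# Hypothetical patterns and weights; the real families and values may differ.
FAMILIES = {
    "api_key": (re.compile(r"\bsk-[A-Za-z0-9_-]{8,}"), 0.8),
    "github_pat": (re.compile(r"\bghp_[A-Za-z0-9]{36}"), 0.8),
    "jwt": (re.compile(r"\beyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+"), 0.5),
    "pem_block": (re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"), 0.9),
}

# Hypothetical score floors, checked from most to least severe.
THRESHOLDS = [(0.7, "high"), (0.4, "medium"), (0.1, "low")]


def risk_score(text: str) -> dict:
    """Score text against each pattern family and return a verdict."""
    score = 0.0
    detected = []
    for family, (pattern, weight) in FAMILIES.items():
        hits = pattern.findall(text)
        if not hits:
            continue
        # Sub-linear dampening (assumed form): one hit counts fully, and each
        # additional hit in the same family adds less than the one before.
        score += weight * math.log2(1 + len(hits))
        # Truncate the sample so the report does not leak the full match.
        detected.append({"family": family, "sample": hits[0][:16]})
    score = min(score, 1.0)
    verdict = next((label for floor, label in THRESHOLDS if score >= floor), "safe")
    return {"verdict": verdict, "score": round(score, 3), "detected_patterns": detected}
```

With these assumed weights, a single `sk-ant-` style prefix is enough to reach a high verdict, while text with no matches is reported as safe.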

If your workspace legitimately handles tokens (e.g., a security testing project), a .resilient_write/policy.yaml file lets you disable families or adjust thresholds.

Typed errors that agents can reason about

This is the part that makes the biggest practical difference. When a write fails, the agent gets back:

    {
      "ok": false,
      "error": "blocked",
      "reason_hint": "content_filter",
      "detected_patterns": ["api_key"],
      "suggested_action": "redact",
      "retry_budget": 2
    }

The agent can now branch on the error. suggested_action: "redact" means “remove the flagged patterns and try again.” retry_budget: 2 means “you have two more attempts before I cut you off.” And crucially, a content_filter rejection is marked as not retriable with the same payload, which prevents the exact retry-thrashing loop that started this project.
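As a sketch of what branching on the envelope might look like on the agent side, the function below maps a response to a next step. The envelope fields come from the example above; the function name `plan_next_step` and the returned step names are hypothetical, and `redact` is the only suggested action the post describes.

```python
def plan_next_step(envelope: dict) -> str:
    """Pick the agent's next step from a write response (hypothetical step names)."""
    if envelope.get("ok"):
        return "done"
    if envelope.get("retry_budget", 0) <= 0:
        # Budget exhausted: stop instead of looping.
        return "give_up"
    if envelope.get("suggested_action") == "redact":
        # Change the payload first; never resend the same bytes.
        return "redact_then_retry"
    if envelope.get("reason_hint") == "content_filter":
        # No fix was suggested and an identical payload would be blocked again.
        return "give_up"
    return "retry"


response = {
    "ok": False,
    "error": "blocked",
    "reason_hint": "content_filter",
    "detected_patterns": ["api_key"],
    "suggested_action": "redact",
    "retry_budget": 2,
}
next_step = plan_next_step(response)
```

The point of the structure is that every branch keys off a field with a small, fixed vocabulary, so the agent never has to parse a free-form error string.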

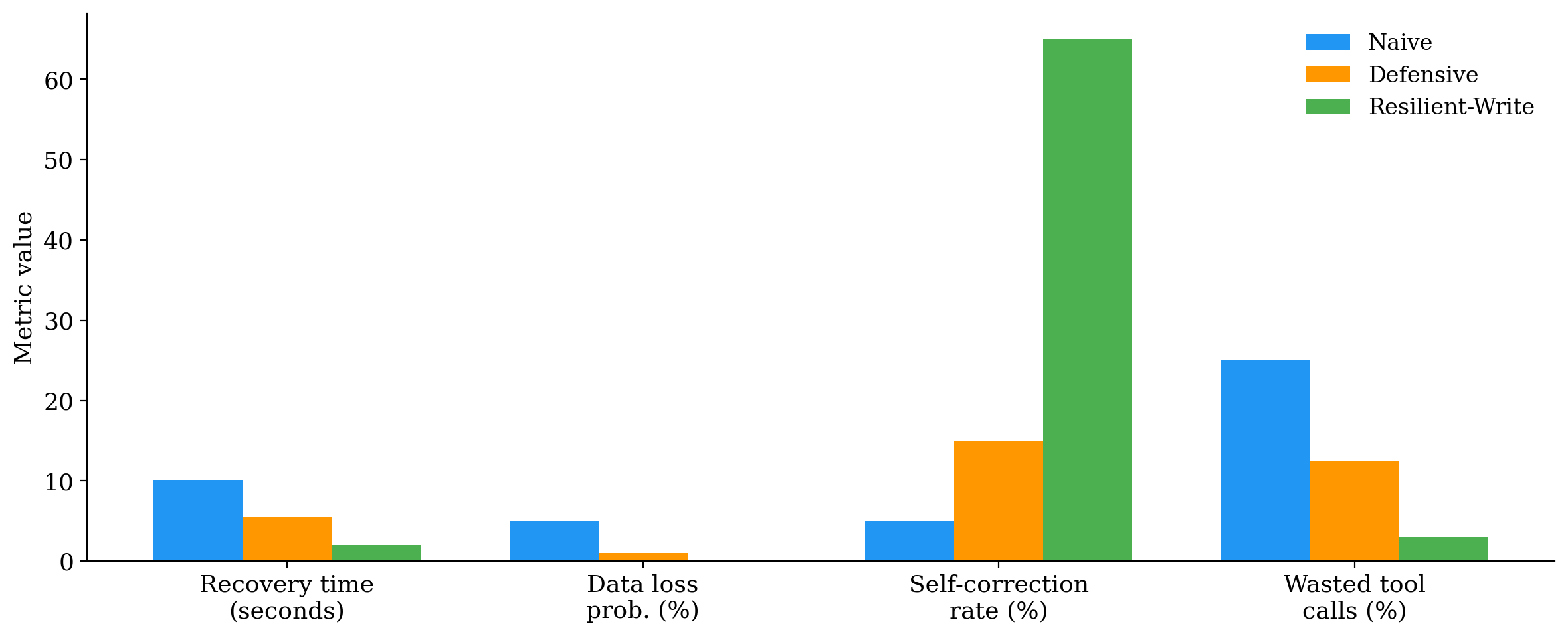

How it performed

I replayed the original failed session with Resilient Write interposed. The difference:

| Metric | Without | With |
|---|---|---|
| Write attempts | 6 | 2 |
| Content lost | yes | no |
| Agent self-corrected | no | yes |
| Manual intervention needed | yes | no |

The agent called rw.risk_score first, got a high verdict with api_key detected, redacted the match, and the subsequent rw.safe_write succeeded on the first attempt.

Across the board, compared to naive file I/O:

Three tools born from dogfooding

While writing the academic paper for this project, I used Resilient Write’s own chunked-write protocol to compose the LaTeX document. Three problems surfaced that the original six layers didn’t cover:

- rw.chunk_preview: I accidentally appended to a stale chunk session from a prior attempt, producing a file with a duplicate preamble. A dry-run compose would have caught this.

- rw.validate: A missing \layer macro definition caused a LaTeX build failure. Format-aware validation (balanced braces, matched environments) would have flagged it at preview time.

- rw.analytics: No visibility into write patterns. How many writes per session? Which files are hot? How fast is the agent writing? The journal had all this data; it just needed a query tool.

All three shipped. The test suite went from 144 to 186 tests.
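The two structural checks named for rw.validate, balanced braces and matched environments, can be sketched in a few lines. This is an illustrative approximation, not the tool's code: the function name `validate_latex` and the message wording are hypothetical, and the sketch ignores `%` comments and verbatim blocks.

```python
import re

ENV_RE = re.compile(r"\\(begin|end)\{([^{}]+)\}")


def validate_latex(source: str) -> list[str]:
    """Return a list of structural problems found in LaTeX source."""
    problems = []

    # Drop escaped characters (\{, \}, \\) so they do not count as braces.
    unescaped = re.sub(r"\\[{}\\]", "", source)
    depth = 0
    for ch in unescaped:
        if ch == "{":
            depth += 1
        elif ch == "}":
            depth -= 1
            if depth < 0:
                problems.append("unmatched closing brace")
                depth = 0
    if depth > 0:
        problems.append(f"{depth} unclosed opening brace(s)")

    # Environments must close in the reverse order they were opened.
    stack = []
    for kind, name in ENV_RE.findall(source):
        if kind == "begin":
            stack.append(name)
        elif not stack or stack.pop() != name:
            problems.append(f"\\end{{{name}}} has no matching \\begin")
    problems.extend(f"\\begin{{{name}}} is never closed" for name in stack)
    return problems
```

An empty list means both checks passed. Running a check like this at preview time, before compose, is cheap compared with a failed LaTeX build.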

Making agents actually use it

The hardest part wasn’t building the tool — it was making agents prefer it over raw Write calls. MCP tool registration makes the tools available, but agents default to what they know.

The solution is a CLAUDE.md file (for Claude Code) or .cursorrules (for Cursor) that maps task types to tools:

| Task | Use this |
|---|---|
| Create/overwrite a file | rw.safe_write |
| Write a large file (>5KB) | rw.chunk_append then rw.chunk_compose |
| Check for secrets | rw.risk_score |
| Store sensitive material | rw.scratch_put |
| End of session | rw.handoff_write |

No code changes to the agent. Just a convention file.

Install

    pip install resilient-write

Or run directly as an MCP server:

    uvx resilient-write

Add to your MCP config:

    {
      "mcpServers": {
        "resilient-write": {
          "command": "uvx",
          "args": ["resilient-write"],
          "env": { "RW_WORKSPACE": "/path/to/project" }
        }
      }
    }

Links

- PyPI: pypi.org/project/resilient-write

- GitHub: github.com/jayluxferro/resilient-write

- Paper: Resilient Write: A Six-Layer Durable Write Surface for LLM Coding Agents — arXiv:2604.10842

The code is MIT licensed. The paper covers the architecture, scoring function, evaluation, and design tradeoffs in detail.